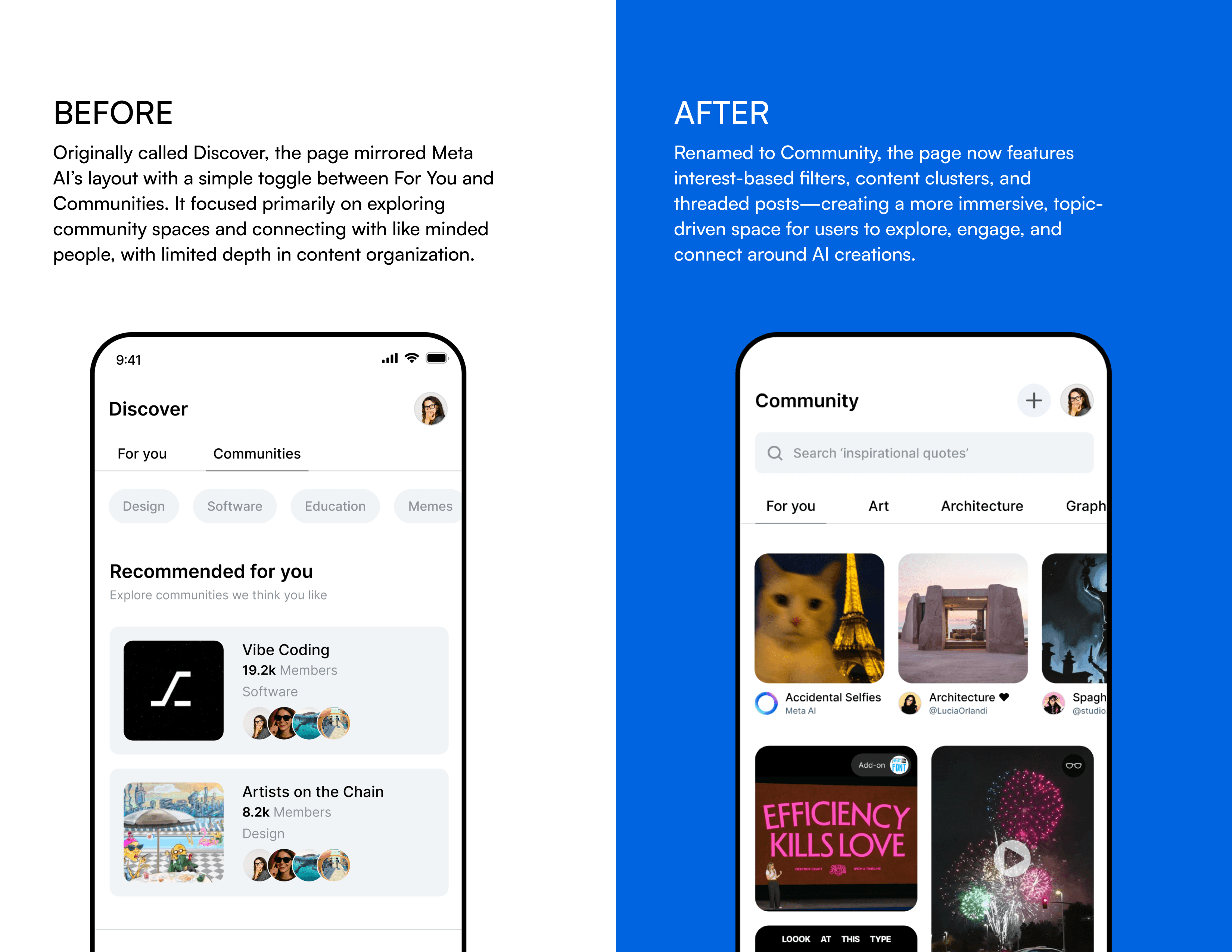

OVERVIEW

Designing a new social experience for Ray-Ban Meta

Ray-Ban Meta smart glasses are a wearable that lets you capture moments, listen to music, and connect with AI — all without touching your phone.

But despite that promise, most Gen Z users leave them on the nightstand and forget about them…

My Role

Interaction & UI Designer

Project Type

Contract

Team

Meta Reality Labs

THE PROBLEM

POV: You're Gen Z

You're 22. You wake up, check Instagram, see what's trending before your feet hit the floor.

You grab your Ray-Ban Metas off the dresser. They're gorgeous. $299. You put them on, snap a photo of your coffee, and... that's kind of it.

The music feature is fine. The AI model can answer questions, but honestly you'd rather just pull out your phone — it's faster, it's familiar, it's already in your hand. By the time you leave the apartment, the glasses are back on the dresser.

LITERALLY ANY GEN Z

"If it doesn't feel personal, it gets left behind."

92% of Gen Z discover things through social media algorithms.

72% through word of mouth.

57% through influencers.

The glasses had none of these loops built in — no shared experience, no social proof, no reason to show them off.

PROTOTYPING

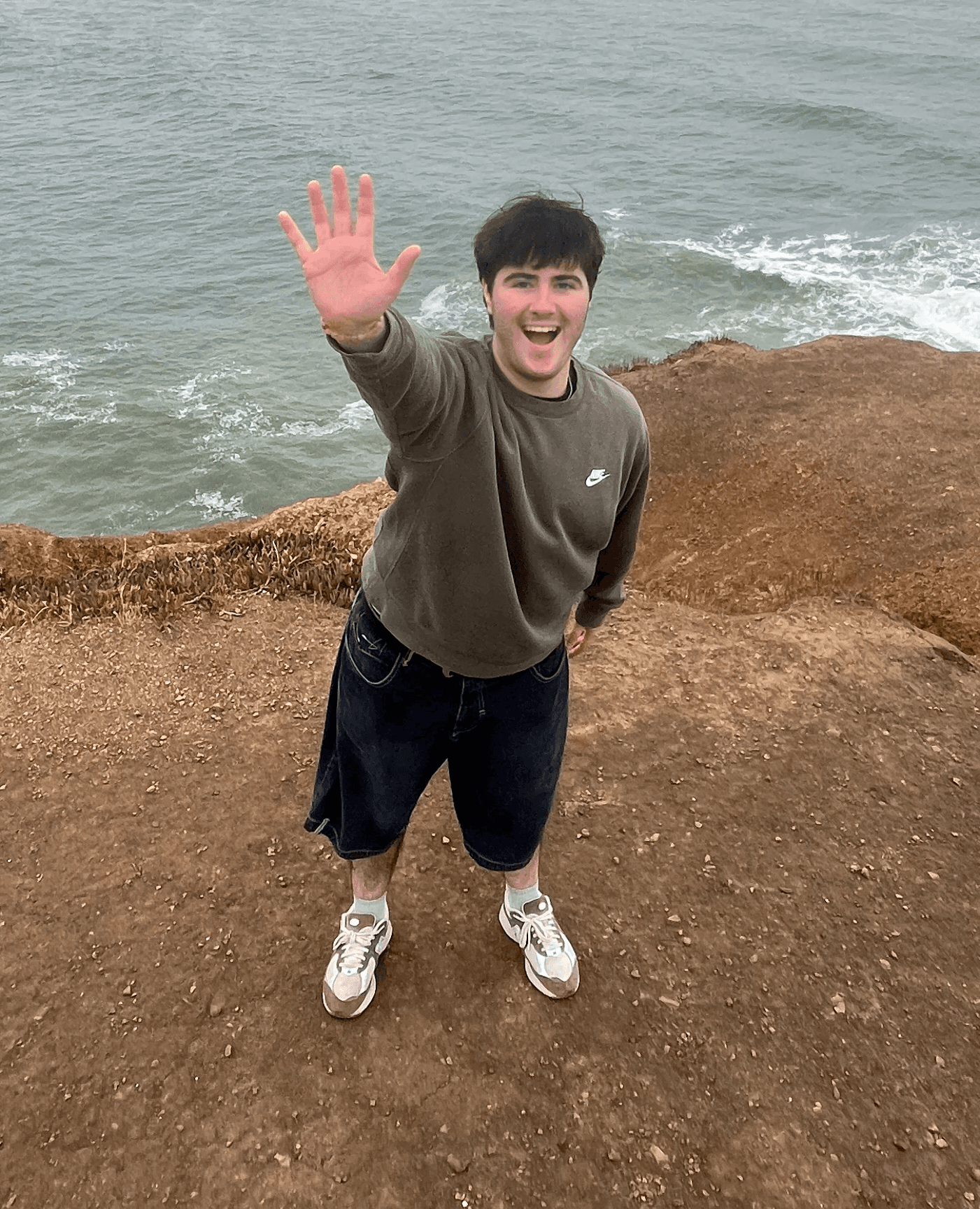

Testing Our Hypothesis: Live-Action Prototyping

We didn't start with wireframes. We put the glasses on and acted out scenarios — real situations that Gen Z actually encounters. We asked: what would make you reach for the glasses instead of your phone right now?

Gen Z wants things to happen for them, not because of them.

What we learned from testing

01

Subtle design

Gen Z doesn't want to perform using their technology in public. Interactions need to feel invisible to everyone but them.

02

Personal over preset

A tool that anyone could use isn't a tool Gen Z will claim. Customization — real, expressive customization — creates ownership.

03

Agents, not assistants

Gen Z wants things to happen for them. An agent that runs in the background and surfaces at the right moment is fundamentally different from one you have to ask.

04

Social drives adoption

92% of Gen Z discovers things through social feeds. The glasses needed shareable, social moments built into the core experience.

SOLUTION

Designing Agent Interactions

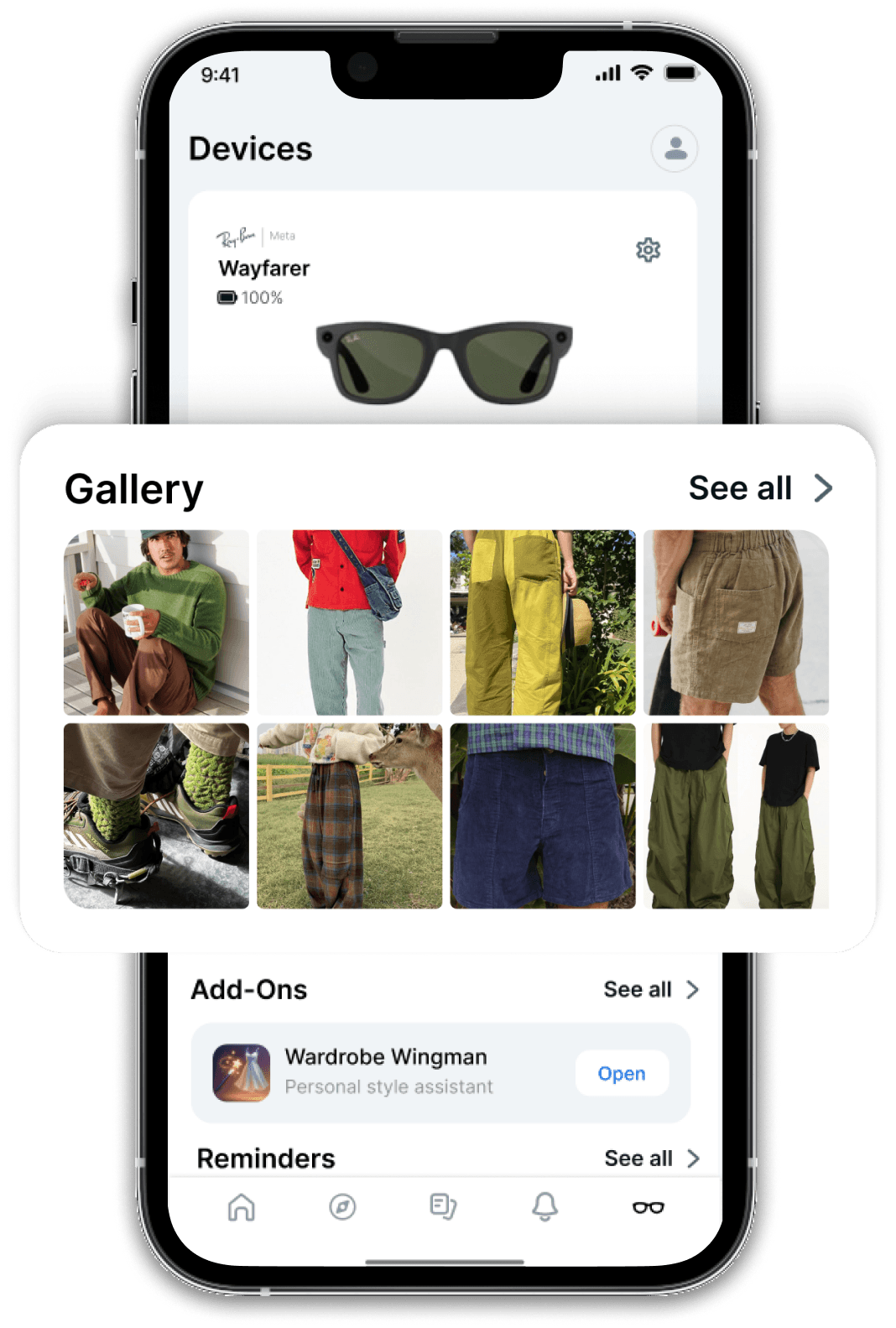

My role focused on two interconnected experiences: how users interact with the physical glasses — the camera, the lights, the audio — and how they create and command AI agents through the glasses themselves.

Here's what I built.

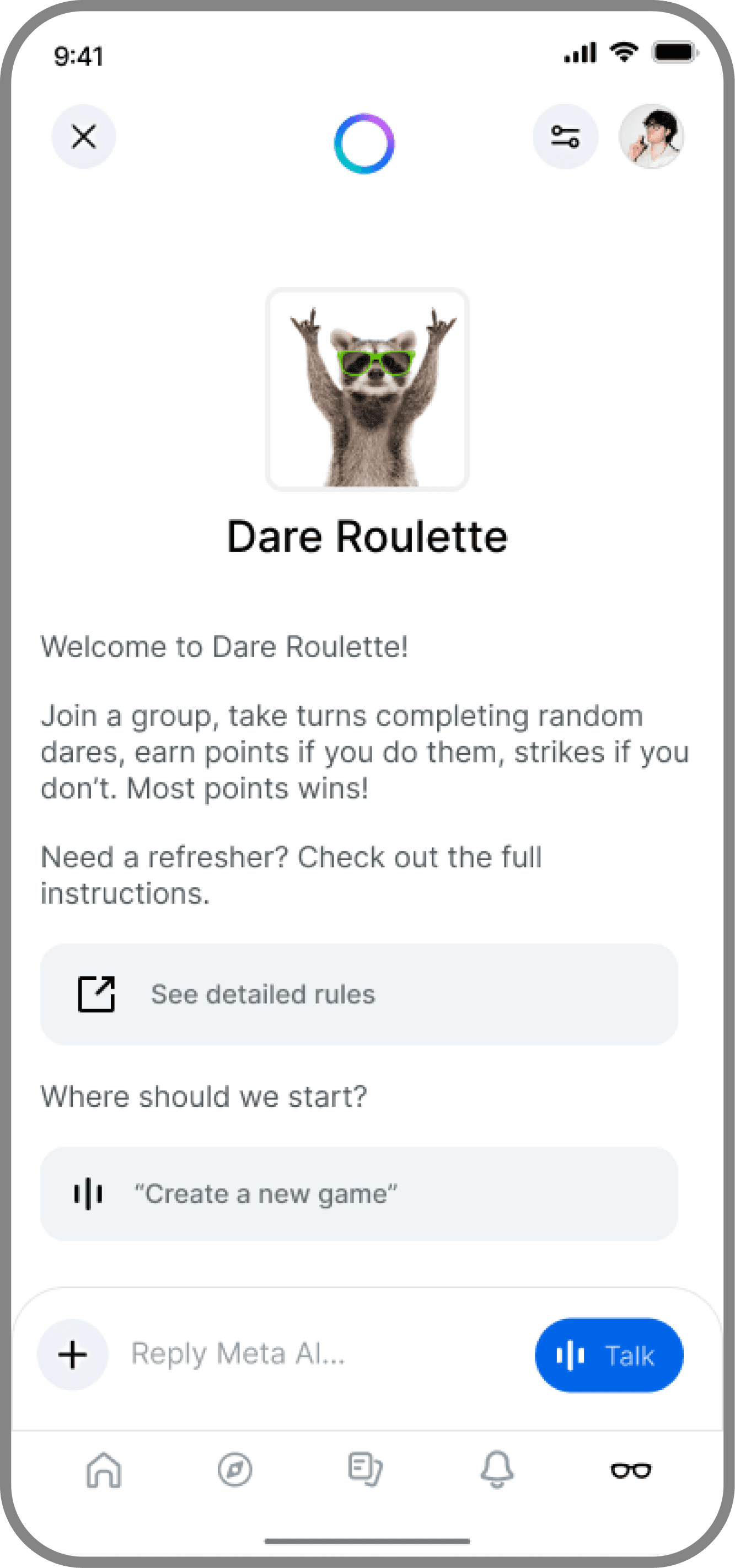

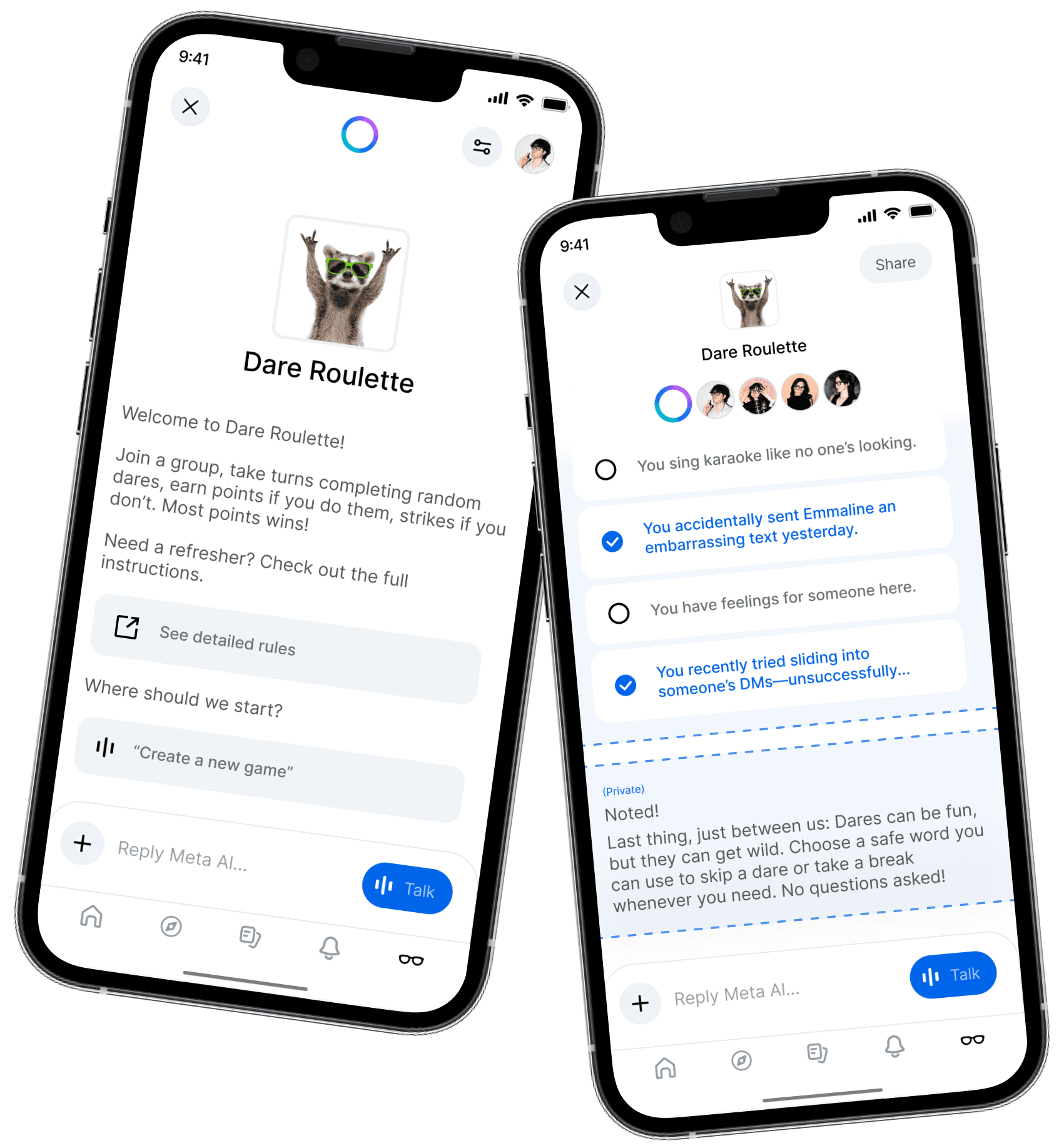

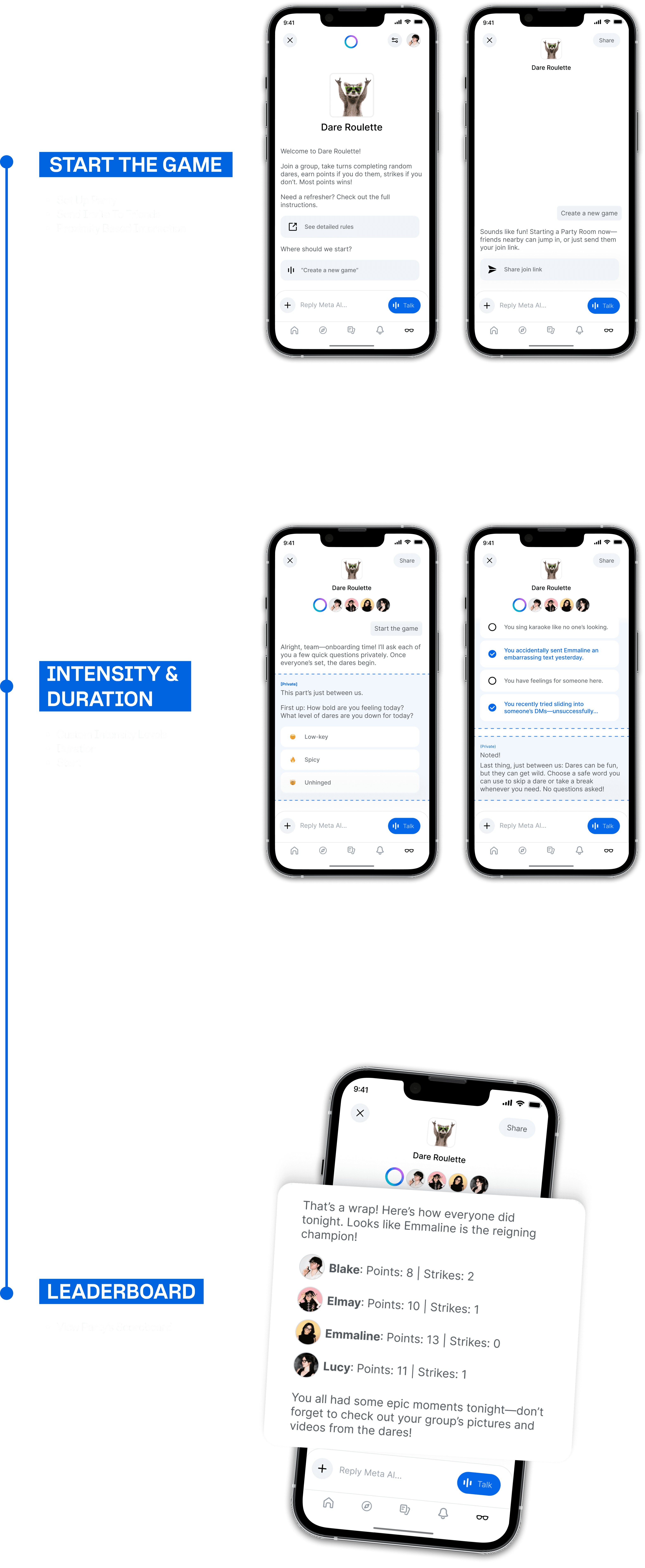

An AI party game that doesn’t ask, it dares. Dare Roulette skips the awkward icebreakers and throws down real-time challenges, capturing your chaos and keeping the vibe electric IRL or over video.

Why does it work?

Gen Z doesn’t want perfect. They want presence. Dare Roulette sparks spontaneous chaos, shared laughs, and zero pressure. It’s all about the moment, the mess, and the magic. No hands, no prep, no problem.

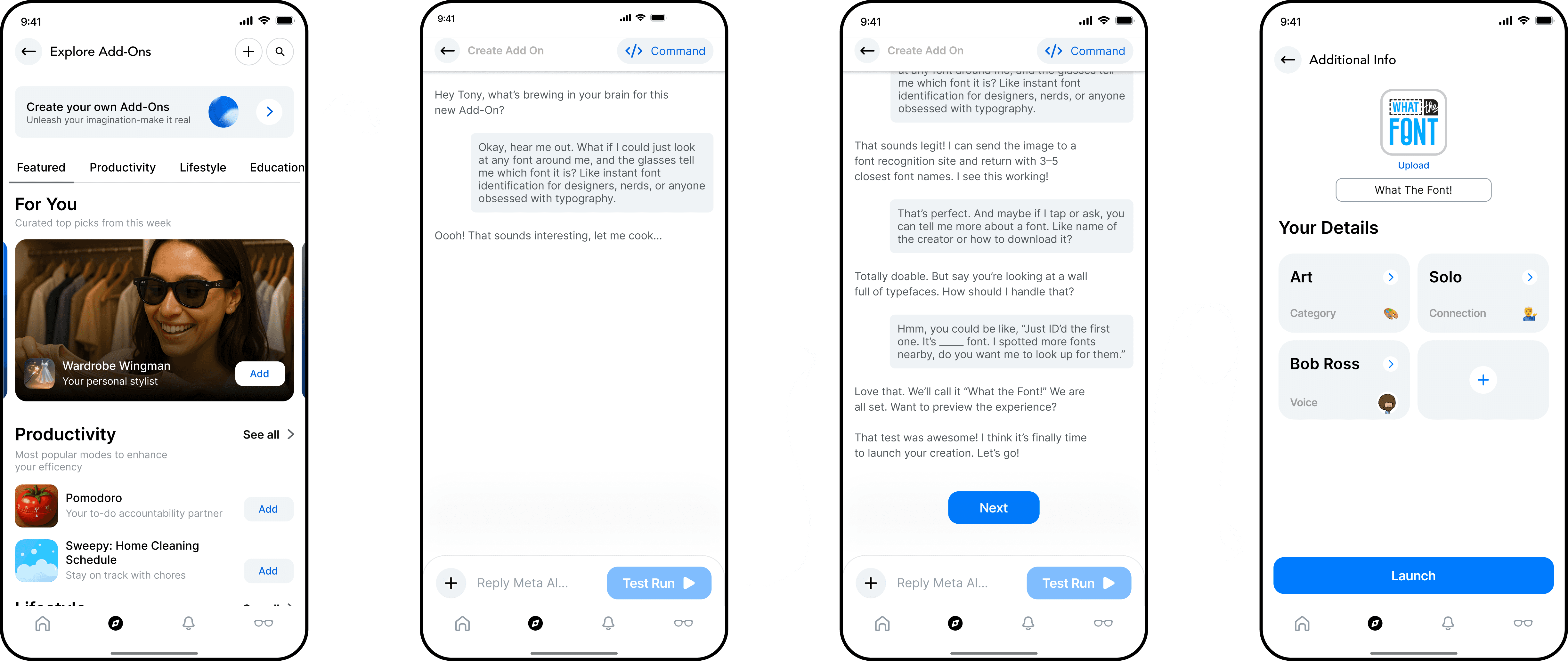

Agent Creation Flow

A guided chat-like experience for building custom AI agents with your specific personality. Users define purpose, meaning, and social shareability.

Pro Tip: You don't need to be a dev to do this! Just chat away.

🔍 Key Steps:

On-Glasses Interactions

Subtle visual, audio, and haptic cues create comfortable social interactions. That defeats the biggest barrier to adoption: anxiety!

Social Gamified Experiences

Multi-user agents enable shared experiences such as party games, scavenger hunts, and collaborative storytelling. The glasses enhance social moments, capture them, and share them without isolating anyone!

IMPACT

Where is this now?

This project was a conceptual MVP — designed within the real constraints of the existing Ray-Ban Meta hardware, built to be deployable within an 18-month scope. The goal was never to ship something overnight.

It was to show Meta what Gen Z adoption could look like if you designed for how this generation actually lives.

I was flown out to Meta's headquarters in California along with four other team members, where we presented to 40+ Meta VPs, principal designers, and engineers. Meta's VP of Wearables and Augmented Reality told me:

KIND WORDS FROM MY IDOLS

"This is more than we could've hoped for and we're so impressed."

VP, PRODUCT DESIGN, AR, AI & WEARABLES

"What you built is super rad, I can't wait to build your work!"

INTERACTION DESIGNER

"I would totally use what you made."

DIRECTOR OF PRODUCT DESIGN

REFLECTION

That's a wrap!

My Challenges: Designing an MVP on an 18-month timeline with no hardware changes… "just take it as it is!" This constraint led to opportunities that aren't available today, such as noise cancellation, hand recognition through the camera, and many more. Staying within these constraints was definitely a fun challenge!

What I learned: Prototype early!! I learned that live-action prototyping is a whole different ball game from general user testing. This is when my interaction brain ran wild, creating different scenarios, acting them out, and iterating. It truly sparked more than one memorable experience!
